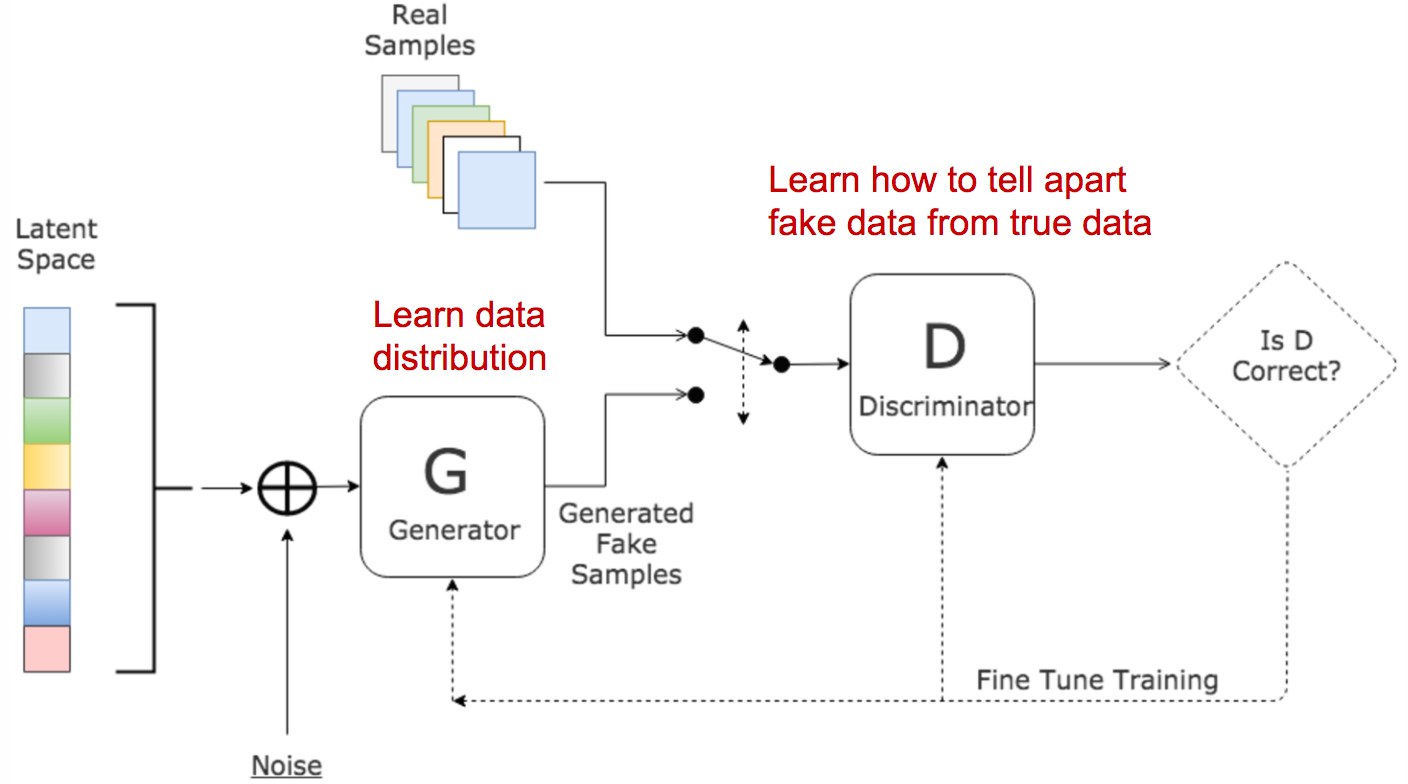

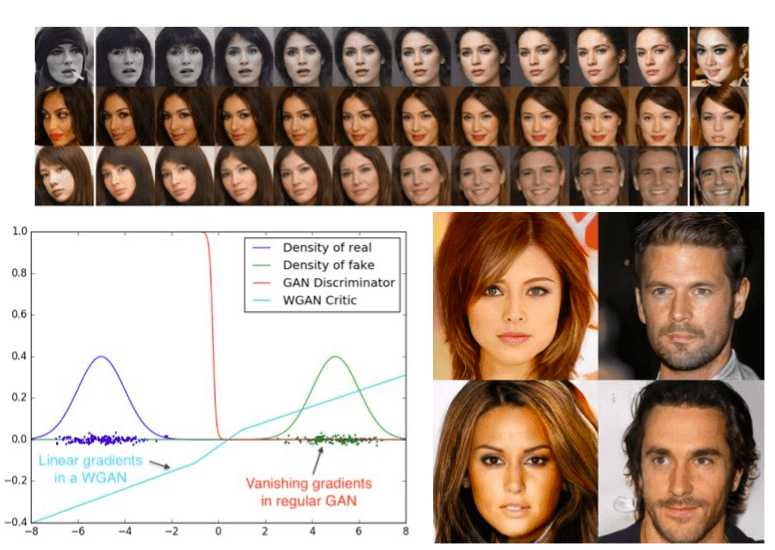

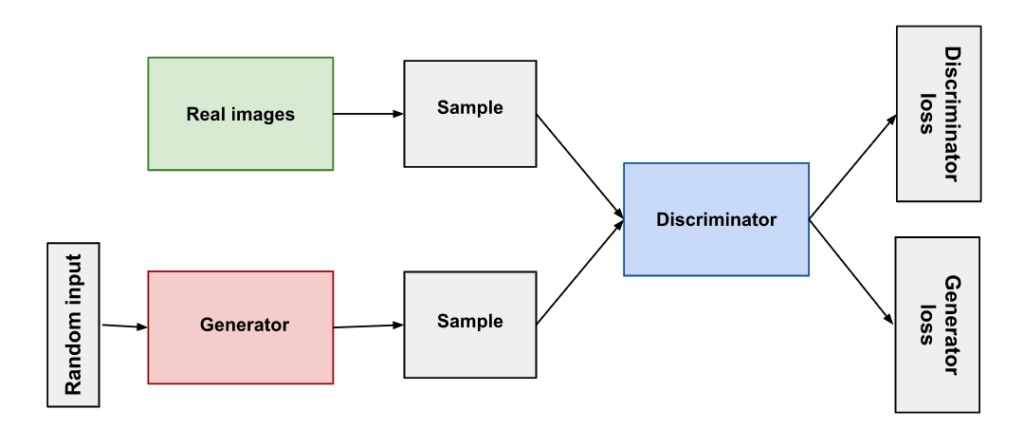

[Updated on 2018-09-30: thanks to Yoonju, we have this post translated into Korean!] [Updated on 2019-04-18: this post is also available on arXiv.] Generative adversarial networks (GANs) have shown great results in many generative tasks that replicate rich real-world content such as images, human language, and music. The idea is inspired by game theory: two models, a generator and a critic, compete with each other while making each other stronger at the same time.
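To make the two-player game concrete, here is a minimal sketch of the value function the two models fight over. It assumes the standard minimax objective of the original GAN formulation, V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))], which this excerpt does not spell out. The function name `gan_value` and the critic outputs below are hypothetical, chosen only for illustration.

```python
import math


def gan_value(d_real, d_fake):
    """Estimate V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: critic outputs in (0, 1) on real samples (hypothetical values).
    d_fake: critic outputs in (0, 1) on generated samples (hypothetical values).
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


# A critic that separates real from fake well yields a high value.
confident = gan_value(d_real=[0.9, 0.8], d_fake=[0.1, 0.2])

# A critic that outputs 0.5 everywhere has been fooled by the generator;
# the value then equals 2 * log(0.5).
fooled = gan_value(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])

print(confident > fooled)
```

Under this assumed objective the critic tries to push the value up while the generator tries to push it down, which is the competition the paragraph above describes.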

[1705.02438] Face Super-Resolution Through Wasserstein GANs

Wasserstein GAN

GitHub - dhyaaalayed/wgan-gaussian: An implementation of Wasserstein GAN to generate 5 different Gaussian distributions

Applied Sciences, Free Full-Text

GANs in computer vision - Improved training with Wasserstein distance, game theory control and progressively growing schemes

CS 182: Lecture 19: Part 3: GANs

WGAN-GP Loss Explained

WGAN: Wasserstein Generative Adversarial Networks

Advancements in GAN Discriminator Models: From Vanishing Gradient to WGAN-GP – CryptLabs

[PDF] From GAN to WGAN

4. Generative Adversarial Networks - Generative Deep Learning [Book]

What is the practical difference between the traditional GAN formulation and the Wasserstein GAN? - Quora

How can I calculate this steadily decreasing WGAN metric? - PyTorch Forums

python - Wasserstein loss can be negative? - Stack Overflow
